Video streaming and delivery is a resource-intensive process. This is due to the various networks a video stream must pass through and to the quality of the video itself, as higher bitrates and resolutions require more data to be sent to the end viewer. As a result, it’s not recommended to broadcast video using your own server. For companies, doing so can result in bottlenecks at the hosting servers or in unnecessary costs to scale a server infrastructure.

One way to avoid both is to use a CDN (content delivery network). This article covers the basics of delivering content over the Internet before explaining why it’s important to have a CDN when streaming video content.

- The delivery process

- Latency

- The streaming video process and the delivery hurdle

- The solution: content delivery networks (CDNs)

- What is a CDN?

- Going beyond a CDN

If you are already familiar with CDNs and would rather learn more about how IBM Watson Media offers a more robust solution for video streaming, read our live video scalability white paper.

The delivery process

Before talking about what CDNs are, it helps to go over the delivery process to understand how they might help.

Assets of various sizes, from images to video streams, are delivered from a computer or server to a receiving device. For efficiency, this content is “cut up” into packets, which are then reassembled by the receiving device. So while the video stream might feel like a single asset to the end viewer, it’s actually made up of many packets (and video chunks, which will be explained later). How long this process takes depends in part on the physical distance between the two ends of the connection. While data can travel at a large fraction of the speed of light, routing decisions and conversions along the way, for example from light to electrical signals, add delay. This delay is referred to as latency.
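As a rough illustration of the “cut up and reassemble” idea, here is a minimal Python sketch. The `Packet` type, the function names and the 16-byte packet size are all made up for this example; real network packets carry headers and are sized and ordered by the underlying protocols.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int        # sequence number, used to restore the original order
    payload: bytes  # one slice of the original asset


def packetize(data: bytes, size: int) -> list[Packet]:
    """Cut an asset into fixed-size packets (the last one may be shorter)."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [
        Packet(seq, data[start:start + size])
        for seq, start in enumerate(range(0, len(data), size))
    ]


def reassemble(packets: list[Packet]) -> bytes:
    """Rebuild the asset, even if the packets arrived out of order."""
    ordered = sorted(packets, key=lambda p: p.seq)
    return b"".join(p.payload for p in ordered)


asset = b"frame-data-" * 10          # stand-in for a piece of a video stream
packets = packetize(asset, size=16)  # 16 bytes is an arbitrary illustrative size
arrived = list(reversed(packets))    # simulate out-of-order arrival
restored = reassemble(arrived)
```

The sequence number is what lets the receiver put the pieces back in order, so the viewer still sees a single asset.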

Latency

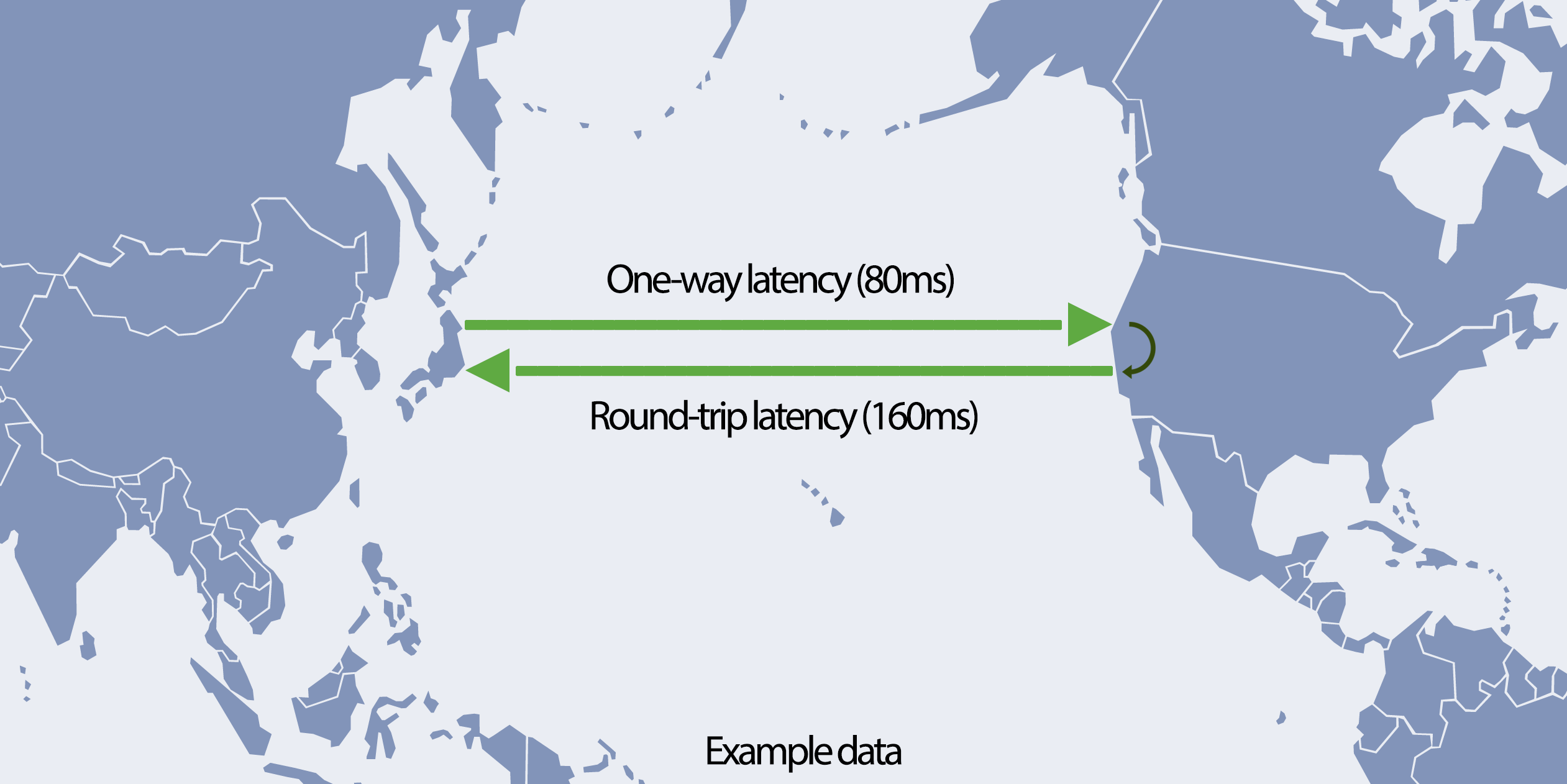

To boil down the concept of latency: it will take someone in the United States longer to access content from a server located in Japan than from the same type of server located in the United States. Delivering content is also a two-way process. For example, one packet traveling from Japan to the United States might take 80ms (milliseconds), which is called one-way latency. Crossing over and back, called round-trip latency, takes twice as long, at around 160ms. This likely sounds very fast, and it is, but when factoring in the volume of packets being sent, which can be in the billions, latency can become an issue. Both ends also have to be in constant communication. It’s not uncommon for a few packets, or even a series of packets, to be lost in transmission, and packet loss occurs even more frequently over wireless networks. Consequently, the two ends need to communicate about which packets need to be resent.
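To put rough numbers on this, the sketch below uses the 80ms one-way figure from the example above. The 10ms figure for a nearby server and the count of 50 exchanges are assumptions chosen only for illustration, and the model is deliberately simplified: it treats every request/response pair as strictly sequential, whereas real protocols keep many packets in flight at once.

```python
ONE_WAY_FAR_MS = 80   # Japan to United States, from the example above
ONE_WAY_NEAR_MS = 10  # assumed figure for a server in the same region


def round_trip_ms(one_way_ms: float) -> float:
    """Round-trip latency is the one-way latency there plus the same back."""
    return 2 * one_way_ms


def sequential_exchange_ms(one_way_ms: float, exchanges: int) -> float:
    """Total wait if each request must be answered before the next is sent."""
    return exchanges * round_trip_ms(one_way_ms)


far_total = sequential_exchange_ms(ONE_WAY_FAR_MS, exchanges=50)
near_total = sequential_exchange_ms(ONE_WAY_NEAR_MS, exchanges=50)
print(f"far server:  {far_total / 1000:.1f} s")
print(f"near server: {near_total / 1000:.1f} s")
```

Even in this toy model, the per-exchange difference adds up quickly once many exchanges are needed.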

Latency is therefore an issue that services on the Internet have to deal with one way or another. Generally speaking, lower latency gives a better user experience: the lower the latency, the faster the communication. A low-latency path is also more tolerant of network glitches, because the communicating parties can quickly figure out where they left off when a packet goes missing and so recover from random packet loss faster. This back-and-forth of acknowledging received packets and resending lost ones is handled by the Transmission Control Protocol (TCP).

The streaming video process and the delivery hurdle

Streaming video content is resource intensive for both the sender and receiver. To deliver a smooth video stream, a constant flow of data is required between the two. To help facilitate this, an approach was devised to divide the video into short segments. Often called video chunks, these can be encrypted and decrypted independently, and each one is delivered as many packets. The chunks can then be played back-to-back to form the “original” video. This gives video streaming a bit more flexibility, especially as so many packets are required to form a video.

To aid further in this process, video chunks are generally preloaded before playback begins. This process is called buffering and is used across video streaming, including live streams, which will have a few seconds of delay to accommodate it. Despite the word buffering having a negative association, the technology helps minimize playback disruptions. Rather than the video halting each time a video chunk is lost, the player can play back from the preloaded video chunks while it attempts to recover the missing one.
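The effect of preloading can be seen in a small simulation. This is a toy model, not how any particular player works: time advances in ticks, the player needs one chunk per tick, and the `arrivals` pattern (whether a chunk arrives during each tick) is invented for the example.

```python
def simulate_playback(arrivals: list[bool], preload: int) -> int:
    """Return the number of ticks during which playback stalls.

    arrivals[i] is True when a chunk arrives during tick i.
    preload is the number of chunks buffered before playback starts.
    """
    buffered = preload
    stalls = 0
    for arrived in arrivals:
        if arrived:
            buffered += 1
        if buffered > 0:
            buffered -= 1  # play one chunk from the buffer
        else:
            stalls += 1    # nothing to play: the spinning wheel appears
    return stalls


# Two late chunks in a row, then another late chunk later on.
arrivals = [True, True, False, False, True, True, False, True]

stalls_without_preload = simulate_playback(arrivals, preload=0)
stalls_with_preload = simulate_playback(arrivals, preload=3)
```

With no preloaded chunks, every late chunk causes a stall; with three chunks preloaded, the same network hiccups are absorbed by the buffer.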

As mentioned, though, there is a clear negative association with buffering. The spinning wheel appears when playback reaches a point where the next video chunk was not received in time, so the player has to pause and preload more chunks. Once that happens, viewers are very likely to become frustrated and abandon the content they had planned to watch. Consequently, any method that reduces latency, speeds up delivery and lets a missing chunk arrive before the preloaded chunks run out is a major benefit.

The solution: content delivery networks (CDNs)

CDNs were created to address this need to reduce latency. While CDNs address a multitude of other issues, such as helping transit providers and ISPs handle traffic, what matters from an end-user perspective is how fast the web page or application responds and whether audio/video playback is uninterrupted.

CDNs are great at solving this problem to help improve the user experience. In a nutshell, and among many other benefits, they aim to reduce the physical distance between the user trying to receive the content and the server sending those packets or chunks.

What is a CDN?

A CDN is a large network of servers that hold copies of data pulled from an origin server. The servers are generally spread across geographically diverse locations. The user or organization then pulls the needed resources (content) from the server closest to them, which is called an edge server. For example, let’s say there is an edge server in Los Angeles and an edge server in New York. If an end user connects from San Francisco, the Los Angeles server will normally be the edge server used.
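The Los Angeles and New York example can be sketched with a simple great-circle distance calculation. This is only an illustration of “closest edge” in the geographic sense: the coordinates are approximate, the `EDGES` table and function names are hypothetical, and real CDNs typically select an edge based on network topology and load rather than straight-line distance.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0

# Approximate (latitude, longitude) of the two hypothetical edge servers.
EDGES = {
    "los-angeles": (34.05, -118.24),
    "new-york": (40.71, -74.01),
}


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometers."""
    lat1, lon1 = map(radians, a)
    lat2, lon2 = map(radians, b)
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def nearest_edge(user: tuple[float, float]) -> str:
    """Pick the edge with the smallest straight-line distance to the user."""
    return min(EDGES, key=lambda name: haversine_km(user, EDGES[name]))


san_francisco = (37.77, -122.42)
chosen = nearest_edge(san_francisco)  # "los-angeles"
```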

This process of selecting an edge server for the viewer can be handled in various ways. Among the several different techniques used is a method called anycast, which decides topologically where the user will connect. Another technique is handling request routing at the DNS (Domain Name System) request level. Based on the geographical location of the resolver, the address of a geographically close edge is returned in the response, and the client connects to that edge to obtain content. There is also a more advanced method where address information for the end user is passed along by the DNS resolver, so the closest edge server is determined from the client’s own address rather than the resolver’s.

Depending on the use case, edge servers also employ a technique called proxy caching. This process stores content on the server itself in order to share those resources among incoming requests. So when a request comes into an edge server, that server will first check whether the requested content was cached there recently. If the content is in the cache, it is served directly. If the content is not present, or the cached resource has expired, the edge server will request it from the origin server (or from an upstream midgress cache that has the content).
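The check-cache-then-origin flow described above can be sketched as follows. This is a minimal illustration, not the behavior of any specific CDN: the `EdgeCache` class, the fixed time-to-live, the `chunk-1.ts` key and the stand-in `origin` function are all assumptions made for the example, and the clock is injectable only so the expiry case can be shown without waiting.

```python
import time
from typing import Callable


class EdgeCache:
    """Serve from a local cache; fall back to the origin on miss or expiry."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes],
                 ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: dict[str, tuple[float, bytes]] = {}  # key -> (expiry, body)

    def get(self, key: str) -> bytes:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                      # cache hit: serve directly
        body = self._fetch(key)                  # miss or expired: ask upstream
        self._store[key] = (now + self._ttl, body)
        return body


origin_requests: list[str] = []


def origin(key: str) -> bytes:
    """Hypothetical origin server that records every request it receives."""
    origin_requests.append(key)
    return f"content of {key}".encode()


now = [0.0]  # fake clock so expiry can be demonstrated deterministically
cache = EdgeCache(origin, ttl_seconds=10, clock=lambda: now[0])

cache.get("chunk-1.ts")  # miss: fetched from the origin
cache.get("chunk-1.ts")  # hit: served from the edge
now[0] = 11.0
cache.get("chunk-1.ts")  # expired: fetched from the origin again
```

Three viewer requests result in only two origin requests here; with many viewers asking for the same chunk within the time-to-live, the savings grow accordingly.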

Going beyond a CDN

Utilizing a content delivery network brings with it a lot of benefits for multiple types of content available over the Internet. Video streaming, being one of the more resource intensive types of content available, benefits even more from utilizing this approach.

However, sometimes a single-CDN approach is not enough. As a result, IBM Watson Media offers two additional measures to help form a complete solution for delivering video content at scale.

The first of these is a QoS (quality of service) approach that uses multiple CDNs for improved performance. By adding a higher-level orchestration layer on top of these existing lower-level techniques, it is possible to achieve more granular, more accurate traffic control with load balancing and quality assurance, while the business logic remains 100% server side. IBM patented this solution, and it is in use for content delivery decisions on the platform. To learn more about the process, read our live video scalability white paper.

While multiple CDNs are used for global streaming, the second measure is aimed at a challenge unique to enterprises. This challenge is a local one, owing to the fact that local download bandwidth is a limited commodity. It can easily become a bottleneck when a large number of employees try to access a video asset at the same time. A perfect example would be an all-hands town hall meeting. Called ECDN (Enterprise Content Delivery Network), this add-on allows organizations to essentially create their own edge server at a desired location. That location can be a company headquarters, a large office, or anywhere else a virtual server can be installed. As a result, live streams and on-demand video assets can be cached on site so they scale to meet internal needs.
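A back-of-the-envelope calculation shows why the office connection becomes the bottleneck. All figures here are assumptions chosen for illustration (500 viewers, a 3 Mbps stream, a 1,000 Mbps office link), and the model is simplified: it assumes an on-site edge server pulls a single copy of the stream over the office link and serves everyone else locally.

```python
def wan_demand_mbps(viewers: int, bitrate_mbps: float,
                    on_site_cache: bool) -> float:
    """Bandwidth needed on the office's Internet link for one stream."""
    if on_site_cache and viewers > 0:
        streams_over_wan = 1        # one copy feeds the on-site edge server
    else:
        streams_over_wan = viewers  # every viewer pulls a separate copy
    return streams_over_wan * bitrate_mbps


VIEWERS = 500          # assumed town hall audience in one office
BITRATE_MBPS = 3.0     # assumed bitrate of the stream
OFFICE_LINK_MBPS = 1000.0  # assumed capacity of the office connection

without_cache = wan_demand_mbps(VIEWERS, BITRATE_MBPS, on_site_cache=False)
with_cache = wan_demand_mbps(VIEWERS, BITRATE_MBPS, on_site_cache=True)

print(f"without on-site cache: {without_cache:.0f} Mbps "
      f"(link saturated: {without_cache > OFFICE_LINK_MBPS})")
print(f"with on-site cache:    {with_cache:.0f} Mbps "
      f"(link saturated: {with_cache > OFFICE_LINK_MBPS})")
```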

IBM’s video streaming solutions utilize both of these methods to deliver content more effectively than a single CDN connection.

Want to learn more about these services and IBM’s technology? Discover what our live streaming platform and enterprise video platform are capable of. Alternatively, contact us directly for more details.