With the news moving at lightning speed, consumers are more tuned in to current events than ever, while media companies are challenged to keep pace. Broadcast networks are under intense pressure to respond quickly to breaking news, world events, and sporting events in order to satisfy consumer demand for instant, high-quality digital experiences.

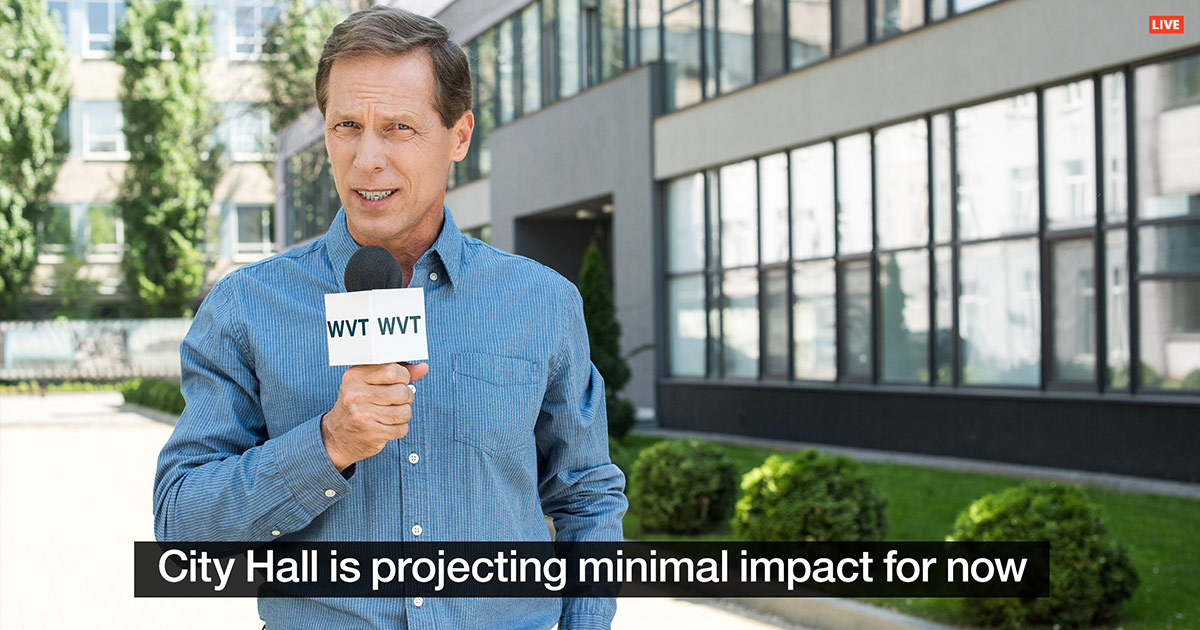

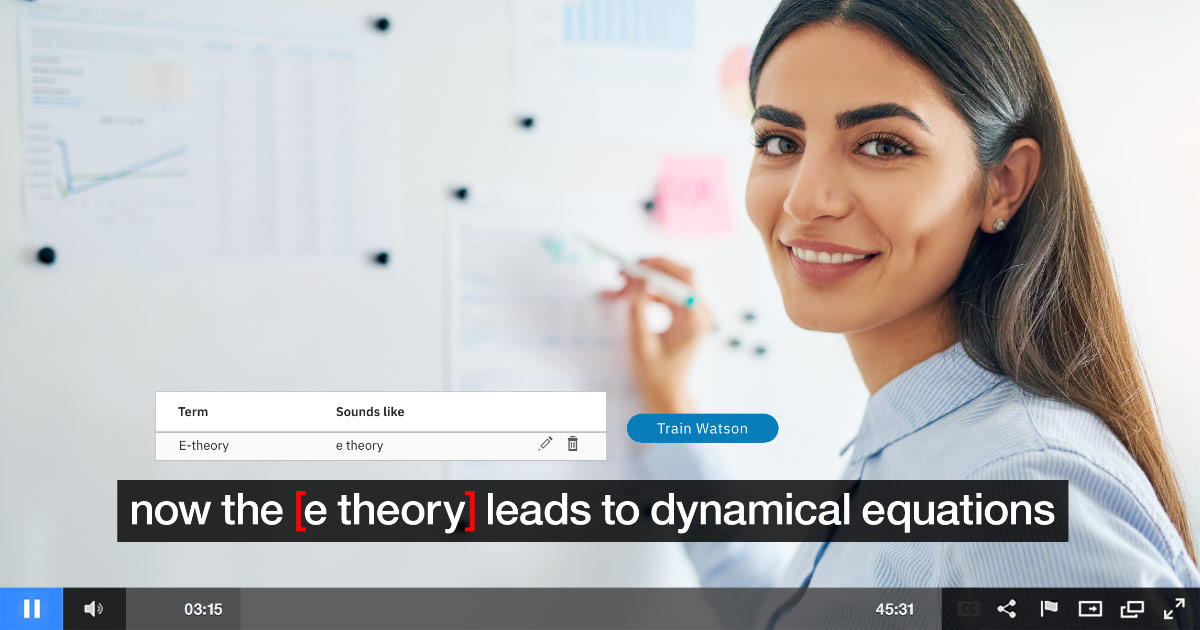

However, delivering accurate captions for live broadcasts is both time- and resource-intensive for broadcast networks, because production teams must manually transcribe live programming in real time, which often leads to delayed or incorrect captions. To solve these challenges, IBM launched Watson Captioning, a flexible, scalable closed captioning software solution that leverages AI to automate the captioning process and uses machine learning to improve accuracy over time. As outlined in this white paper, Captioning Goes Cognitive: A New Approach to an Old Challenge, Watson is bringing greater context to video assets while removing some of the challenges associated with closed captioning.

Through its Live Captioning functionality, Watson Captioning generates closed captions for broadcast networks, unlocking value from live video content and improving the viewer experience. By accurately captioning live video content, broadcasters can provide premium experiences for local viewers, increase accessibility for the hearing-impaired community, and adhere to compliance standards.